Complex Data Capture: Leveraging MapInfo and FME

- March 28, 2018

Many thousands of polygons, snapped to a variety of backdrop layers, with a full set of attributes, fully checked and cleaned of any geometry issues, plus the delivery date is in a few weeks? No problem.

Gamma recently carried out a large-scale data capture project for a client, involving map digitising, quality control and data production. We brought together the data creation capabilities of MapInfo Pro and the automation functionality of FME and delivered the project to spec, on time and within budget. To quote the client: “we couldn’t be any happier with the quality of the mapping and service delivered”.

Create, Check, Fix – working with spatial data in MapInfo Pro

MapInfo and FME

While we could have met the project spec requirements using MapInfo Pro alone, we leveraged our FME capabilities to automate large parts of the data processing element of the project.

FME is a data integration, transformation and automation tool with powerful spatial data handling capabilities. Its functionality and flexibility make it ideal for supporting data capture projects.

In this project, the data capture requirements were unique for each area and required interpretation of paper-based drawings. FME is best suited to processing entire datasets rather than authoring actions for individual features; therefore MapInfo was chosen for the main data capture work.

The area where FME really added value to the project was in the automation of tasks required to be carried out on the captured data before it could be released to the client.

The tasks that were automated using FME can be grouped into the following headings: (1) Data cleansing; (2) Data validation; (3) Data capture error reporting; and (4) Data enrichment.

1. Data cleansing

An automated process was developed in FME to cleanse captured data. Apart from area IDs, only spatial data was captured by the team using MapInfo, so the cleansing process focused on the spatial properties of the data.

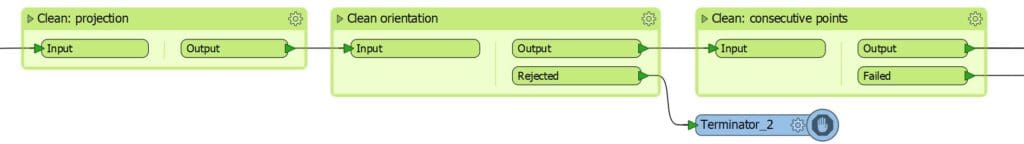

The order of the cleansing transformations was important. The least invasive transformations were carried out first, such as adjusting polygon orientation and removing duplicate consecutive points.

The goal was to get the data in the best shape possible before the more heavy-handed transformations were carried out. This increased the effectiveness of the transformations and reduced the risk of introducing new errors into the data.
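The article does not show the FME workspace itself, so the following is only a plain-Python sketch of what two "light" steps of this kind might look like: dropping duplicate consecutive points, then normalising ring orientation. The function names and the choice of counter-clockwise as the target orientation are assumptions made for illustration, not Gamma's actual configuration.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def remove_consecutive_duplicates(ring: List[Point]) -> List[Point]:
    """Drop any vertex identical to the one immediately before it."""
    cleaned: List[Point] = []
    for pt in ring:
        if not cleaned or pt != cleaned[-1]:
            cleaned.append(pt)
    return cleaned


def signed_area(ring: List[Point]) -> float:
    """Shoelace formula: positive for counter-clockwise rings."""
    total = 0.0
    # Pair each vertex with the next one, wrapping around to the start.
    for (x1, y1), (x2, y2) in zip(ring, ring[1:] + ring[:1]):
        total += x1 * y2 - x2 * y1
    return total / 2.0


def orient_counter_clockwise(ring: List[Point]) -> List[Point]:
    """Reverse the ring if it is currently clockwise (assumed convention)."""
    return ring if signed_area(ring) >= 0 else ring[::-1]


def light_clean(ring: List[Point]) -> List[Point]:
    """Apply the least invasive steps first, in a fixed order."""
    return orient_counter_clockwise(remove_consecutive_duplicates(ring))


# A clockwise unit square with one repeated vertex.
raw_ring = [(0, 0), (0, 0), (0, 1), (1, 1), (1, 0), (0, 0)]
clean_ring = light_clean(raw_ring)
```

Neither step moves a vertex to a new position, which is why steps like these are safe to run before any heavier repair.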

After this first batch of light cleaning, the data was put through a more aggressive cleaning process. Common geometry issues, including self-intersections, slivers and spikes, were fixed. The order of the transformations was important here as well.

2. Data validation

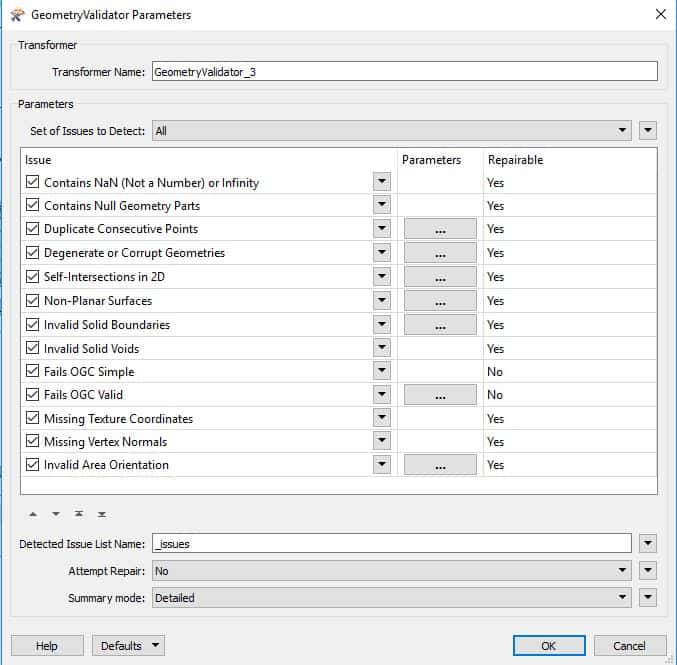

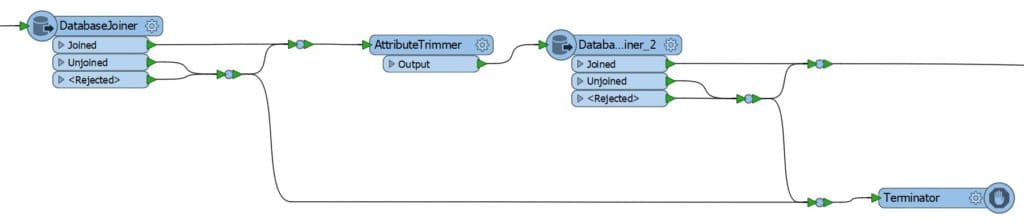

During the cleansing process, FME checked the data for validity after every cleansing transformation. Before the data was deemed valid, it was put through the full set of validation checks in FME’s ‘GeometryValidator’ transformer.

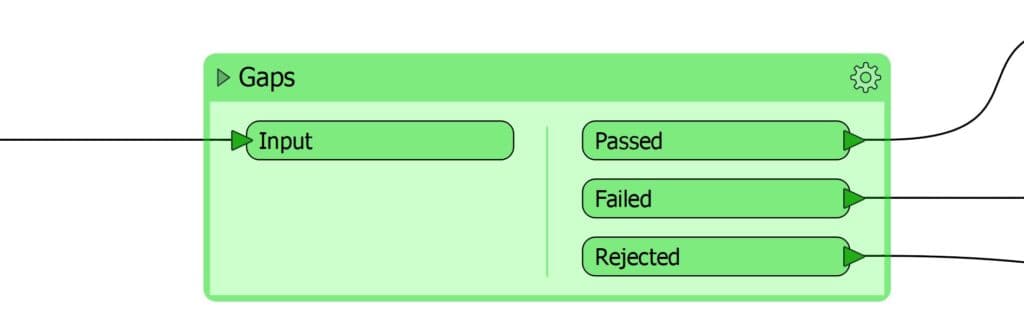

It was also put through a series of custom checks built by Gamma, e.g. record counts, overlaps, duplicate features, gaps and geometry type.
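As a rough illustration of what custom checks like these could involve, here is a minimal plain-Python sketch covering three of them: record count, duplicate features and geometry type. The feature structure (`id`, `type`, `coords`) and the `run_custom_checks` function are hypothetical. Overlap and gap checks are left out because they need a proper geometry engine.

```python
from typing import Dict, List


def run_custom_checks(features: List[Dict], expected_count: int) -> List[str]:
    """Return a list of human-readable problems; an empty list means a pass."""
    problems: List[str] = []

    # Record count: did every captured feature make it through?
    if len(features) != expected_count:
        problems.append(
            f"record count: expected {expected_count}, found {len(features)}"
        )

    first_seen: Dict[tuple, str] = {}
    for feature in features:
        # Geometry type: this sketch assumes only polygons are valid.
        if feature["type"] != "Polygon":
            problems.append(
                f"{feature['id']}: unexpected geometry type {feature['type']}"
            )
        # Duplicate features: identical coordinate sequences.
        key = tuple(feature["coords"])
        if key in first_seen:
            problems.append(f"{feature['id']}: duplicate of {first_seen[key]}")
        else:
            first_seen[key] = feature["id"]

    return problems


square = [(0, 0), (1, 0), (1, 1), (0, 1), (0, 0)]
sample = [
    {"id": "A", "type": "Polygon", "coords": square},
    {"id": "B", "type": "Polygon", "coords": square},
    {"id": "C", "type": "LineString", "coords": [(0, 0), (5, 5)]},
]
problems = run_custom_checks(sample, expected_count=4)
```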

3. Data capture error reporting

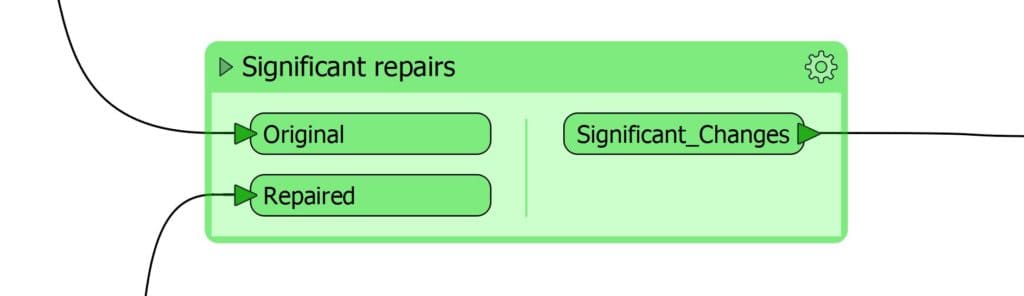

To avoid the risk of over-transforming the data, FME was not solely relied upon to cleanse the data. FME was used to do repairs on the data, not to make significant changes.

If at any point the data failed a validation check, the feature in question was filtered out for manual fixing in MapInfo. To assist the data capture team, FME produced a point dataset containing the exact location of each error and a description.

FME also created another dataset showing where a significant number of vertices had been altered during the automated cleansing process. These were reviewed and either accepted or rejected by the data capture team.
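The article does not say how "significant" was defined, so the sketch below assumes a simple vertex-count threshold. It compares each feature's vertices before and after cleansing and flags those where the number of changed vertices meets the threshold. The function names and the default threshold of 3 are illustrative assumptions only.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]


def count_altered_vertices(before: List[Point], after: List[Point]) -> int:
    """Vertices removed from `before` plus vertices newly present in `after`."""
    before_set, after_set = set(before), set(after)
    return len(before_set - after_set) + len(after_set - before_set)


def flag_for_review(
    original: Dict[str, List[Point]],
    cleansed: Dict[str, List[Point]],
    threshold: int = 3,  # assumed cut-off; the real value is not given
) -> Dict[str, int]:
    """Map feature ID to altered-vertex count for features needing review."""
    flagged: Dict[str, int] = {}
    for feature_id, ring in original.items():
        altered = count_altered_vertices(ring, cleansed[feature_id])
        if altered >= threshold:
            flagged[feature_id] = altered
    return flagged


original = {
    "A": [(0, 0), (1, 0), (1, 1), (0, 1)],
    "B": [(0, 0), (2, 0), (2, 2), (0, 2)],
}
cleansed = {
    "A": [(0, 0), (1, 0), (1, 1), (0, 1)],            # untouched
    "B": [(0, 0), (2, 0), (2.1, 2), (0, 2.2), (1, 3)],  # heavily altered
}
review_list = flag_for_review(original, cleansed)
```

A moved vertex counts twice here (once as removed, once as added), which biases the sketch towards flagging features for human review rather than silently accepting them.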

This data capture, cleansing, validation and review process was repeated until the data met the validity requirements.

4. Data enrichment

The data was then enriched by styling and adding attributes to it using an automated FME process. Automating the styling meant any updates could be incorporated easily. An alternative approach would have resulted in many man-hours being spent updating every feature on multiple occasions.

FME populated the attribute information using a look-up table for static values and calculated area attributes on the fly.
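The idea can be sketched in plain Python as follows: static values are merged in from a look-up table keyed on a captured code, and the area is computed from the geometry at run time rather than stored. The table contents, the `code` key and the attribute names are all hypothetical; the real look-up table is not shown in the article.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]

# Hypothetical look-up table of static attributes, including a style name.
STATIC_ATTRIBUTES: Dict[str, Dict[str, str]] = {
    "RES": {"land_use": "Residential", "style": "solid-red"},
    "COM": {"land_use": "Commercial", "style": "solid-blue"},
}


def polygon_area(ring: List[Point]) -> float:
    """Unsigned area via the shoelace formula, in squared coordinate units."""
    total = 0.0
    for (x1, y1), (x2, y2) in zip(ring, ring[1:] + ring[:1]):
        total += x1 * y2 - x2 * y1
    return abs(total) / 2.0


def enrich(feature: dict, lookup: Dict[str, Dict[str, str]]) -> dict:
    """Return a copy of the feature with static and calculated attributes."""
    enriched = dict(feature)
    enriched.update(lookup[feature["code"]])           # static values
    enriched["area"] = polygon_area(feature["ring"])   # calculated value
    return enriched


captured = {"id": "A1", "code": "RES", "ring": [(0, 0), (10, 0), (10, 5), (0, 5)]}
result = enrich(captured, STATIC_ATTRIBUTES)
```

Because styling and static values live in one table, changing a style means editing one row and re-running the process, not touching every feature.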

Finally, a series of outputs could be created from the same data using FME, e.g.:

- A dataset containing all features.

- A dataset with just the external boundaries of sets of features.

- A dataset with just the bounding boxes of sets of features.
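To illustrate the third output, here is a minimal plain-Python sketch that derives one bounding box per set of features. The `group` key used to define a set is an assumption; the article does not say how sets of features were identified.

```python
from typing import Dict, List, Tuple

Box = Tuple[float, float, float, float]  # (min_x, min_y, max_x, max_y)


def bounding_boxes_by_group(features: List[dict]) -> Dict[str, Box]:
    """Grow one bounding box per group as each feature is visited."""
    boxes: Dict[str, Box] = {}
    for feature in features:
        xs = [x for x, _ in feature["ring"]]
        ys = [y for _, y in feature["ring"]]
        box = (min(xs), min(ys), max(xs), max(ys))
        group = feature["group"]
        if group in boxes:
            current = boxes[group]
            # Expand the existing box so it also covers this feature.
            box = (
                min(current[0], box[0]),
                min(current[1], box[1]),
                max(current[2], box[2]),
                max(current[3], box[3]),
            )
        boxes[group] = box
    return boxes


features = [
    {"group": "north", "ring": [(0, 0), (2, 0), (2, 2), (0, 2)]},
    {"group": "north", "ring": [(2, 1), (5, 1), (5, 4), (2, 4)]},
    {"group": "south", "ring": [(10, 10), (11, 10), (11, 12)]},
]
boxes = bounding_boxes_by_group(features)
```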

Benefits of blending FME with MapInfo in your workflow

- Using automated FME processes ensured the data was cleansed and validated in a consistent and reliable manner.

- The FME processes were quick to develop and had the flexibility to facilitate ongoing enhancements to the process.

Every automated repair carried out by FME was a task that would otherwise have had to be carried out by an operator. The time saving was considerable, and the quality of the finalised data was extremely high. To quote the client once more: “we are thrilled to have such a high-quality product”.

If you have a complex data capture or analytics project, be sure to contact us to see how we can help.

© 2018 Gamma.ie by Michael Fitzpatrick

About Gamma

Gamma is a Location Intelligence (LI) solutions provider; we integrate software, data and services to help our clients reduce risk through better decision-making. Gamma was established in 1993 and was the first company to develop LI for the private sector in Ireland. The company has expanded to become a global provider of cloud-hosted LI systems, micro-marketing solutions and related services.